"It's not what happens to you, but how you react to it that matters."

Date: 15th March 2026

Hey AI enthusiast,

AI research got 100 times faster, Meta's flagship model isn't ready, and a $650 billion spending wave just developed a visible crack. This week's three stories cover the full spectrum: a breakthrough, a setback, and a warning. Grab a coffee. It's a good one.

In this edition

PS: If you want to unleash the power of OpenClaw AI agents to grow your business, set up a time to speak with me here »

Andrej Karpathy Just Automated AI Research Overnight 🤖

One GitHub repo dropped this week and the internet hasn't stopped talking about it.

Andrej Karpathy released AutoResearch, a system where you write plain instructions in a Markdown file and an AI agent handles everything else. It edits training code, runs experiments, checks the results, and keeps whatever works. You wake up to a list of improvements backed by real data.

Think of it like leaving a very capable intern at your desk overnight, except this one runs 100 experiments before your alarm goes off.

Here's how it actually works:

- You write high-level goals in plain English inside a Markdown file. Things like "try bigger models" or "test new optimizers." That's your entire job.

🔁 The AI agent takes over from there. It writes and edits the actual training code, then kicks off a training run on one GPU for exactly five minutes.

- Each experiment gets the same five-minute budget. It doesn't matter how complex the idea is. Every run gets equal time, which keeps the comparison fair.

⚖️ The system scores each run by a single metric: validation loss. Lowest score wins. The better version gets saved, the worse one gets deleted.

- This loop runs all night in its own git branch. The system completes roughly 12 experiments per hour and hits around 100 full runs by morning.

🌅 You wake up to a set of real, data-backed improvements. No debugging. No late nights. Just results waiting for review.
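The loop above can be sketched in a few lines of Python. This is a toy illustration, not the code in Karpathy's repo: `run_experiment` and `propose_change` are hypothetical stand-ins for the real five-minute training run and the agent's code edit, and the "loss" is a made-up function of one learning-rate setting.

```python
import random

MINUTES_PER_RUN = 5  # fixed budget per experiment, as described above


def run_experiment(config, rng):
    """Hypothetical stand-in for a five-minute training run.

    Returns a toy validation loss: a function of the learning rate plus noise.
    """
    return (config["lr"] - 0.003) ** 2 * 1e4 + rng.uniform(0.0, 0.01)


def propose_change(config, rng):
    """Hypothetical stand-in for the agent's edit: scale one setting."""
    candidate = dict(config)
    candidate["lr"] = max(1e-5, config["lr"] * rng.choice([0.5, 0.8, 1.25, 2.0]))
    return candidate


def overnight_loop(hours, seed=0):
    rng = random.Random(seed)
    best = {"lr": 0.05}
    best_loss = run_experiment(best, rng)
    history = [best_loss]
    runs = int(hours * 60 / MINUTES_PER_RUN)  # 12 runs per hour
    for _ in range(runs):
        candidate = propose_change(best, rng)
        loss = run_experiment(candidate, rng)
        if loss < best_loss:  # lower validation loss wins
            best, best_loss = candidate, loss  # keep the better version
        history.append(best_loss)  # the worse version is simply dropped
    return best, best_loss, runs, history


if __name__ == "__main__":
    best, best_loss, runs, _ = overnight_loop(hours=8)
    print(f"{runs} runs, best config {best}, validation loss {best_loss:.4f}")
```

With a five-minute budget, an eight-hour night works out to 96 runs, which is where the "around 100 by morning" figure comes from.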

Why this matters for anyone building with AI:

- One person with one GPU can now run what used to require a full research team. The barrier to serious AI experimentation just dropped hard.

🎯 Prompt quality has become the core skill. You're no longer writing code for experiments. You're writing instructions for a system that writes its own code.

- The GitHub repo crossed 33,800 stars within days of launch. That's the community sending a clear signal.

So what?

The takeaway is simple. AI research just got 100 times faster and open to anyone willing to write a good prompt. The technique also extends beyond model training: the same loop can be used to optimize conversion on your website, improve your ads, and so on.

The lab coat is optional. The Markdown file is not.

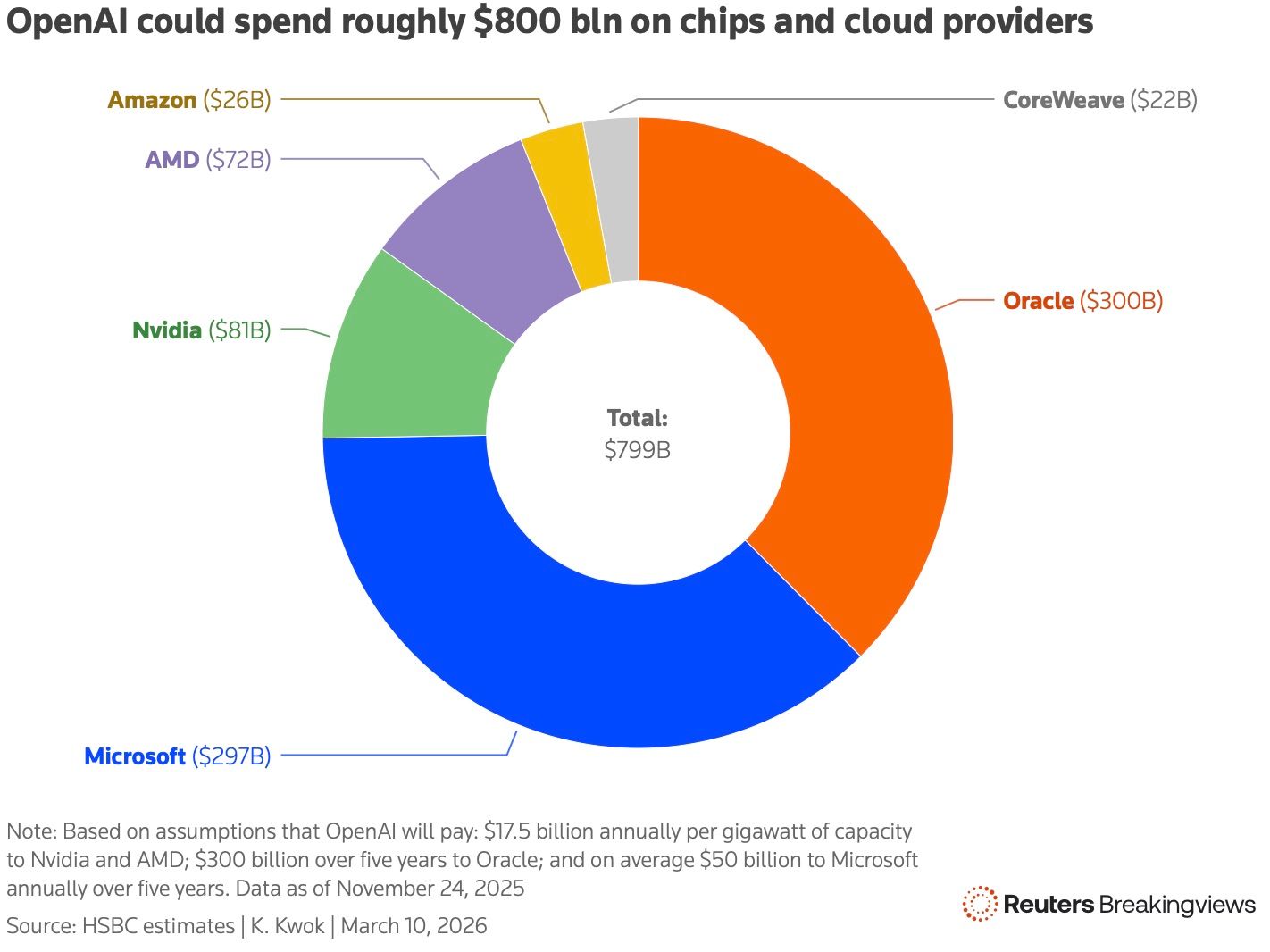

What happens when the two companies holding up a $650 billion spending wave start to wobble?

That's the question Reuters Breakingviews put on the table this week. And the answer isn't pretty.

The Scale of the Bet

Big Tech didn't just invest in AI. It went all in.

Alphabet, Amazon, Meta, and Microsoft committed roughly $650 billion in spending this year alone, mostly on data centers to power AI chatbots. That's about 2% of U.S. GDP. Morgan Stanley projected $2.9 trillion in global data center investment between 2025 and 2028, with about $900 billion coming from private credit and asset-backed lending.

The entire machine depends on two companies staying solvent: OpenAI and Anthropic.

Source: Reuters

💸 The Math Doesn't Add Up (Yet)

Here's where it gets uncomfortable.

Anthropic spent over $10 billion training models and answering queries. Total revenue generated: roughly $5 billion. That's a 2-to-1 ratio of cumulative costs to revenue. For OpenAI, HSBC estimated nearly $280 billion in cash burn between now and 2030, and the company may need $207 billion in additional financing to get there.

OpenAI's recently announced $110 billion funding round sounds reassuring. But look closer. Amazon pledged $50 billion, yet only committed to an initial $15 billion upfront. The rest is tied to OpenAI either going public or hitting an artificial general intelligence milestone. SoftBank, expected to chip in $30 billion, was recently flagged by S&P Global with a negative credit outlook.

That's not a safety net. It's a conditional promise.

🏗 The IPO Gamble

Both companies explored IPOs as soon as this year, hoping public markets could replace the private funding engine.

For that to work at a $1 trillion valuation, OpenAI would need to reach at least $250 billion in annual revenue by 2030. Internal targets reportedly pushed even higher, to $280 billion. To put that in perspective: it's the size of today's Microsoft, built from scratch in four years.

OpenAI's annualized revenue hit $25 billion by the end of February, up 25% from December. That's real growth. But open-source competitors are flooding the market and pressing prices down fast.

⚠️ One Lab Fails, Everyone Feels It

Microsoft quietly disclosed that 45% of its $625 billion demand backlog is tied to OpenAI. Oracle and Microsoft shares dropped after both companies confirmed plans to keep increasing capital spending. Credit-default swaps on Oracle reached their highest levels since 2008.

If either lab stumbles, the effects wouldn't stay contained. Nvidia chip sales would slow. Power infrastructure investments would sit underused. Lenders would face losses across a sprawling, interconnected web of deals.

Anthropic also carries fresh political risk. After CEO Dario Amodei refused to let the company's AI be used for mass surveillance or autonomous weapons, the Trump administration labeled it a supply-chain risk. Anthropic sued to fight back, while cloud partners Amazon, Alphabet, and Microsoft said they'd continue offering its products to customers.

A rescue buyer would likely emerge if either company collapsed. But that scenario would come with a sharp valuation reset and a rethink of how much compute the industry actually needs.

🧠 What This Means for Founders

The AI infrastructure boom built a capital ecosystem unlike anything seen before. But ecosystems this interconnected are also fragile.

If you're building on top of these platforms, now is a good time to think about concentration risk. Which providers, which models, which compute dependencies sit at the center of your product? The next 18 months will test whether the AI investment thesis holds up under pressure.

The boom isn't over. But the era of "growth solves everything" is starting to face some hard questions.

AI & Error: Full Delegation, Finally

I said it wasn't possible yet. I was wrong about how soon it would become possible.

In my very first column, I told you what I was looking for: full delegation. Hand something to AI and forget about it. I also told you we weren't there yet.

I was wrong about how long it would take before the wish came true.

As of last week, every morning, a briefing lands in my inbox. It begins with the weather forecast for home and work. Then, top news stories across world news (heavy US focus), India, tech and AI, business, science, and sports, with seasonal awareness baked in. Then a curated Explore section of longform pieces that I might want to read. And last, a Deep Dive section if I have more time. Since I commute most days now, there’s also audio narration I can listen to while driving.

The whole thing is prepared while I’m asleep and waiting at the top of my inbox.

How I got there

The core engine is a Python script triggered by GitHub Actions on a daily cron schedule. Every morning, it wakes up, fetches weather from Open-Meteo, pulls NBA scores from the ESPN public API, then hands off to Claude for the heavy lifting: search the web across a curated source list per category, cross-reference stories, synthesize summaries, flag subscriber-only sources so I know when I have access. Then Deepgram narrates the whole thing, Resend delivers it, and GitHub commits the output and cleans up files older than seven days.
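As one concrete piece of that pipeline, here is a minimal sketch of the seven-day cleanup step. It is an illustration under assumptions, not the actual script: the function name `prune_old_files` and the idea that outputs sit in a single directory are hypothetical.

```python
import time
from pathlib import Path


def prune_old_files(directory, max_age_days=7, now=None):
    """Delete files in `directory` last modified more than `max_age_days` ago.

    Returns the sorted names of the files that were removed.
    """
    now = time.time() if now is None else now
    cutoff = now - max_age_days * 24 * 60 * 60
    removed = []
    for path in Path(directory).iterdir():
        # Only touch regular files; subdirectories are left alone.
        if path.is_file() and path.stat().st_mtime < cutoff:
            path.unlink()
            removed.append(path.name)
    return sorted(removed)
```

Passing `now` explicitly makes the function easy to test without waiting a week.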

No servers. No hosting costs. The only real expenses are Claude and Deepgram — a few dollars a month combined.

I built the whole pipeline in Claude Code. This is now the third substantial system I've shipped this way, and the pattern is the same every time: describe what you want, scrutinize the result, iterate to refine. The syntax isn't the bottleneck. The thinking is.

The Explore section: a philosophy problem disguised as a feature

Early versions of the news brief had an Explore section that was reliably empty.

The problem was a logic error I'd borrowed from news: I'd constrained searches to content published today. That works fine for headlines. It's the wrong frame for the kind of content I actually wanted in Explore — longform essays, reported features, deeply researched pieces from WIRED, Quanta, Aeon, MIT Technology Review. That kind of writing isn't published daily. A constraint built for breaking news was systematically filtering out everything I actually wanted to read.

The fix was to rethink what Explore is for. News surfaces what happened. Explore surfaces what's worth your time — and those are different jobs. Once I separated them conceptually, the implementation followed: a two-week window instead of today-only, a broader and deliberately curated source pool, and a bias toward pieces that reward slow reading rather than fast scanning. The section also prioritizes sources I already pay for — The Economist, NYT — so I'm actually getting value from subscriptions instead of letting them quietly lapse into habit.

The result is a section that feels less like a news feed and more like a recommendation from a well-read friend who knows your interests. That was the goal. Getting there required being precise about what problem it was actually solving.
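The difference between the two windows is small in code, which is partly why the bug was easy to miss. A minimal sketch, assuming each candidate item is a dict with a `published` date (the field names and `within_window` helper are illustrative, not taken from the real pipeline):

```python
from datetime import date, timedelta

NEWS_WINDOW_DAYS = 0      # today only: right for headlines
EXPLORE_WINDOW_DAYS = 14  # two weeks: right for longform


def within_window(items, today, window_days):
    """Keep items whose `published` date is within the last `window_days` days."""
    earliest = today - timedelta(days=window_days)
    return [item for item in items if earliest <= item["published"] <= today]


if __name__ == "__main__":
    today = date(2026, 3, 15)
    items = [
        {"title": "Breaking story", "published": date(2026, 3, 15)},
        {"title": "Longform essay", "published": date(2026, 3, 6)},
    ]
    print([i["title"] for i in within_window(items, today, NEWS_WINDOW_DAYS)])
    print([i["title"] for i in within_window(items, today, EXPLORE_WINDOW_DAYS)])
```

With the today-only window, the nine-day-old essay never appears, which matches the reliably empty Explore section described above.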

The Deep Dive queue: solving the backlog problem

Everyone has a pile of "I'll read this later" links. Mine lived in a browser tab graveyard — accumulating for months, never actually cleared.

I added the Deep Dive section to the brief to surface one item at a time: one thing to read, one thing to listen to. It also lists how many days it's been in the queue. After seven days, a nudge appears. That's the whole mechanic, and it's doing more work than I expected. The constraint is the feature. One item at a time means I'm never overwhelmed. The day counter makes the backlog visible without burying me in it. The nudge is accountability without nagging.
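That mechanic fits in a short function. The sketch below makes assumptions the column doesn't state: queue entries are dicts with `kind`, `title`, and `added` fields, the oldest item of each kind is surfaced first, and the nudge turns on once an item reaches seven days.

```python
from datetime import date

NUDGE_AFTER_DAYS = 7  # assumption: the nudge appears once an item is 7 days old


def deep_dive_picks(queue, today):
    """Pick one item to read and one to listen to, with days spent in the queue."""
    picks = {}
    for kind in ("read", "listen"):
        candidates = [entry for entry in queue if entry["kind"] == kind]
        if not candidates:
            continue
        oldest = min(candidates, key=lambda entry: entry["added"])
        days = (today - oldest["added"]).days
        picks[kind] = {
            "title": oldest["title"],
            "days_in_queue": days,
            "nudge": days >= NUDGE_AFTER_DAYS,
        }
    return picks
```

However long the backlog gets, the brief still shows at most two entries.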

The harder problem was where these items come from — and how they get into the brief.

Leo, my AI Chief of Staff, lives in a Claude Project. I talk to Leo throughout the week, including on mobile using voice mode, which works well for capturing things in the moment. When I come across something worth reading, I tell Leo. Leo files it. That's a natural workflow, and I wanted to keep it — Leo already knows my reading patterns, my current interests, my backlog. It made sense to curate the queue there.

But Claude Projects can't write to GitHub. And the Daily Brief runs as an automated pipeline that has no awareness of my Claude Projects. These two systems don't share any native connection.

The solution was to make the handoff deliberate rather than automatic. At the start of each Claude Code session, I run a single command that reads Leo's current lists and pushes them into the repository's state file, where the Daily Brief can find them. It's one step, takes seconds, and keeps both systems in sync without requiring any direct integration between them. (For the full article, including a link to the GitHub repo to build your own Chief of Staff, go to the web edition: https://www.onemorethinginai.com/p/karpathy-ai-intern-ran-100-tests-meta-blinked-wall-street-nervous-about-ai)

This pattern is worth thinking about more broadly. As you build AI systems that serve different purposes — one for thinking and planning, one for automated execution — you'll hit this problem. The systems don't talk to each other natively. The solution isn't always a technical integration. Sometimes it's a deliberate handoff point: a moment where you explicitly move information from one context into another. That handoff can be manual and still be robust.

The slash command I built for this isn't interesting because of its syntax. It's interesting because it represents a design decision: rather than trying to connect two systems that weren't built to connect, I created a defined transfer point. One step, explicit, human-initiated. The brief gets fresh data. Leo stays separate.
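For a sense of how small that transfer point can be, here is a minimal sketch of the write side. It assumes the lists have already been pulled out of the Claude Project into a plain dict and that the state file is JSON. The `deep_dive_queue` key and the function name are hypothetical, since the column does not describe the real file layout.

```python
import json
from pathlib import Path


def sync_lists_to_state(lists, state_path):
    """Replace the queue section of the state file with the latest lists.

    Other keys already in the state file are left untouched.
    """
    path = Path(state_path)
    state = json.loads(path.read_text()) if path.exists() else {}
    state["deep_dive_queue"] = lists  # hypothetical key name
    path.write_text(json.dumps(state, indent=2, sort_keys=True))
    return state
```

The function overwrites the queue rather than merging into it, so the project stays the single source of truth and the state file is only a copy.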

The question I keep coming back to

In Week 4, I wrote about building intelligence before infrastructure. Leo's early failures came from clever decision logic sitting on top of unreliable data. The Daily Brief is the opposite: boring infrastructure first, intelligence only where it genuinely adds value.

Claude handles the search and synthesis — reading across sources, cross-referencing stories, writing coherent summaries in six categories. Everything else runs on free APIs and a cron job. That ratio feels right. AI does the thing that's genuinely hard for humans to do at scale. The plumbing is just plumbing.

Full delegation works when you're precise about what you're delegating. I'm not handing off my judgment about what matters — I built the source list, the categories, the curation philosophy. I'm delegating retrieval, synthesis, and delivery. That's a narrower claim than "AI does my morning." It's also the one that's actually true.

To try building your own, start here: https://nisha-pillai.com/daily-brief (it has the code and a full guide).

Nisha Pillai transforms complexity into clarity for organizations from Silicon Valley startups to Fortune 10 enterprises. A patent-holding engineer turned MBA strategist, she bridges technical innovation with business execution—driving transformations that deliver measurable impact at scale. Known for her analytical rigor and grounded approach to emerging technologies, Nisha leads with curiosity, discipline, and a bias for results. Here, she is testing AI with healthy skepticism and real constraints—including limited time, privacy concerns, and an allergy to hype. Some experiments work. Most don't. All get documented here.

Meta's AI Avocado Isn't Ready for the Table 🥑

Mark Zuckerberg promised to push the AI frontier. The kitchen is running behind.

Meta's next big AI model, code-named Avocado, missed its March release target. Internal tests showed it falling short of leading models from Google, OpenAI, and Anthropic on reasoning, coding, and writing tasks. The model beat Meta's own previous work and Google's Gemini 2.5 from last March. But it couldn't keep up with Gemini 3.0 from November.

The release got pushed to at least May. And in the meantime, Meta's leaders reportedly discussed licensing Google's Gemini to power their own AI products while Avocado catches up.

Here's what's happening inside Meta's AI push:

- After Llama 4 fell short last year, Zuckerberg recruited Alexandr Wang, the 29-year-old CEO of Scale AI, as Meta's new chief AI officer. Meta invested $14.3 billion in Wang's company to make the hire happen.

🏗️ Wang built a new internal AI lab called TBD Lab, staffed with about 100 researchers. The lab started working on two models: Avocado for language tasks and Mango for image and video generation.

- TBD Lab finished Avocado's pre-training phase by the end of last year. Post-training began in January, when the team set a mid-March release window. That window has since closed.

🎬 The only product TBD Lab has shipped so far is Vibes, a video app in the style of OpenAI's Sora.

- Meta has spent heavily to compete. The company committed $600 billion toward data centers and projected spending up to $135 billion this year, nearly double what it spent last year.

⚖️ Internal tension has surfaced too. Wang clashed with Meta's chief product officer and chief technology officer over how the new models should support the company's advertising business.

- After rumors spread that Zuckerberg and Wang had a falling out, Meta moved fast to shut the story down. Zuckerberg posted a selfie with Wang on Threads with the caption "Meanwhile at Meta HQ."

🍉 Meta's leaders are already planning the model after Avocado. They've named it Watermelon.

The bigger picture for anyone watching the AI race:

- Frontier model development is expensive, slow, and unpredictable. Even companies spending hundreds of billions can miss their own deadlines.

🏁 Google, OpenAI, and Anthropic have built leads that aren't easy to close. Meta's gap isn't fatal, but it's real.

- Being second to the frontier matters more than people admit. It affects recruiting, product momentum, and investor confidence.

The Avocado is still ripening. Whether it arrives in May ready to compete, or needs more time on the counter, will tell us a lot about how sustainable Meta's AI ambitions really are.

Three Posts that caught my eye on X

1. Anthropic powers ahead, for now.

2. Artificial brain cells play Doom.

3. Figure Robot gets better at cleaning rooms!

📚 Sources & Further Reading

What Happens if OpenAI or Anthropic Fails (Karen Kwok, Reuters Breakingviews)

Karpathy Unleashes AutoResearch (Andrej Karpathy, GitHub)

Meta Is Not Ready to Serve Avocado (Eli Tan, The New York Times)
